Real-Time CDC Pipelines from PostgreSQL to Snowflake

Glenn Gillen

VP of Product, GTM

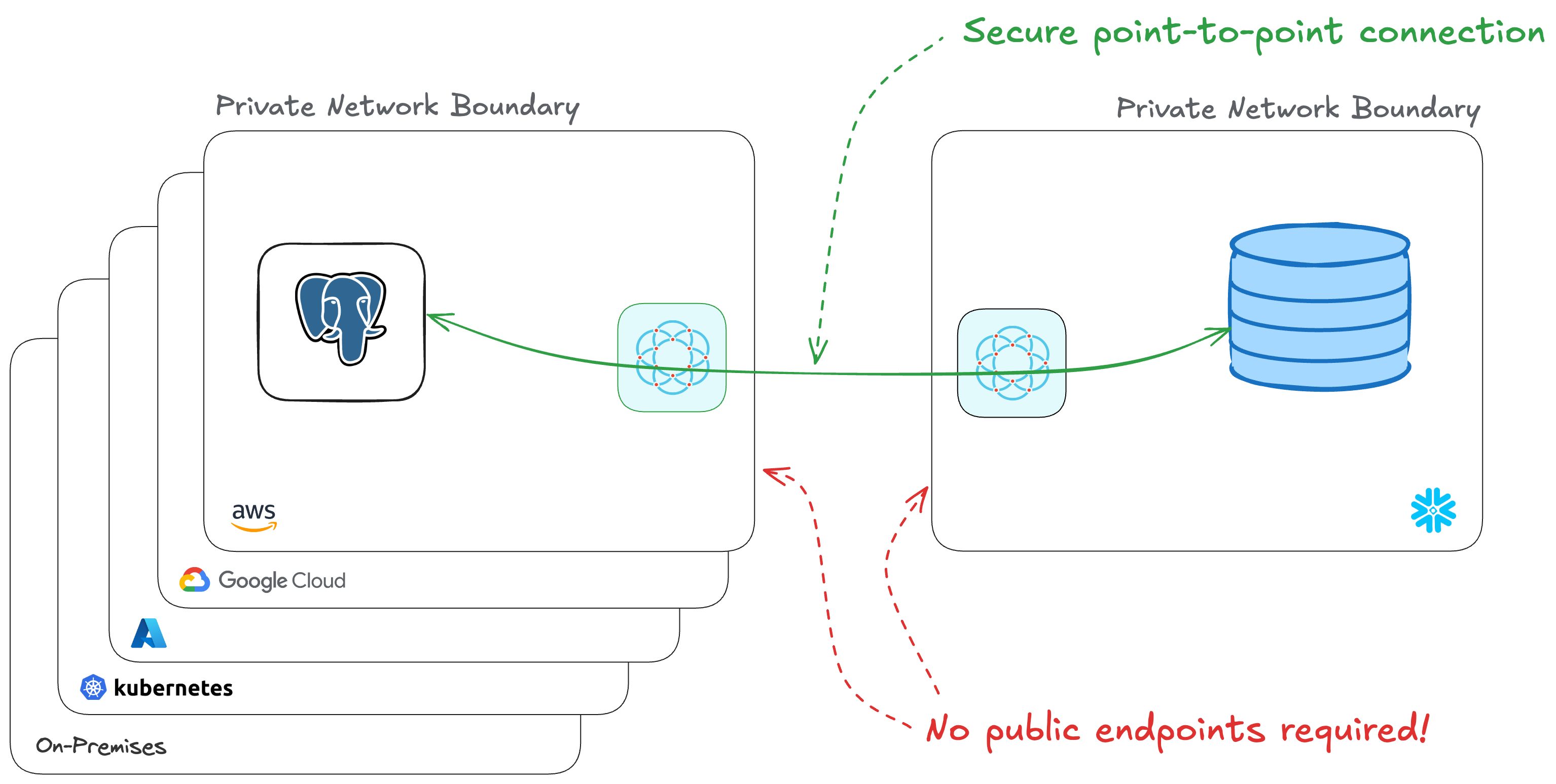

What if I told you there was a way to use Change Data Capture (CDC) to stream all those insights you've got in your private PostgreSQL Database, in real-time, back to Snowflake? That it'll use private point-to-point connections that don't require you to manage IP allow lists, open firewall ports, or setup services like PrivateLink, and that it will take you less than 15 minutes to setup!

Introducing the Snowflake Pull to PostgreSQL Connector!

Snowflake 💙 PostgreSQL

PostgreSQL is a powerful, open-source relational database system that's widely used for storing and managing structured data. It's often the backbone of many applications, holding critical operational data. However, getting this data into a centralized analytics platform can be challenging.

Snowflake is The Data Cloud and the place to support workloads such as data warehouses, data lakes, data science / ML / AI, and even cybersecurity. This centralization brings a huge amount of convenience through breaking down data silos and allowing teams to make smart data-informed decisions.

Connecting to your PostgreSQL database can be problematic depending on your network topology. It would be convenient to give the database a public address, but that's a significant increase in risk for a system that handles a lot of important data. Managing IP allow lists and updating firewall ingress rules improves security but can be cumbersome to manage. Alternatives like PrivateLink are better, but they too can be cumbersome to setup and require your systems to be on the same public cloud and in the same region.

In this post I'm going to show you how to securely connect Snowflake to your private PostgreSQL database, in just a few minutes. We will:

- Setup a PostgreSQL database in AWS with CDC enabled

- Connect PostgreSQL to Snowflake with a private encrypted connection

- Configure Snowflake to receive and store the data from PostgreSQL

Amazon Relational Database Service (RDS) for PostgreSQL

We're going to provision an Amazon RDS PostgreSQL Database so we can see an end-to-end experience of data moving from Snowflake to PostgreSQL. If you have an existing PostgreSQL database you're able to use you can skip this step.

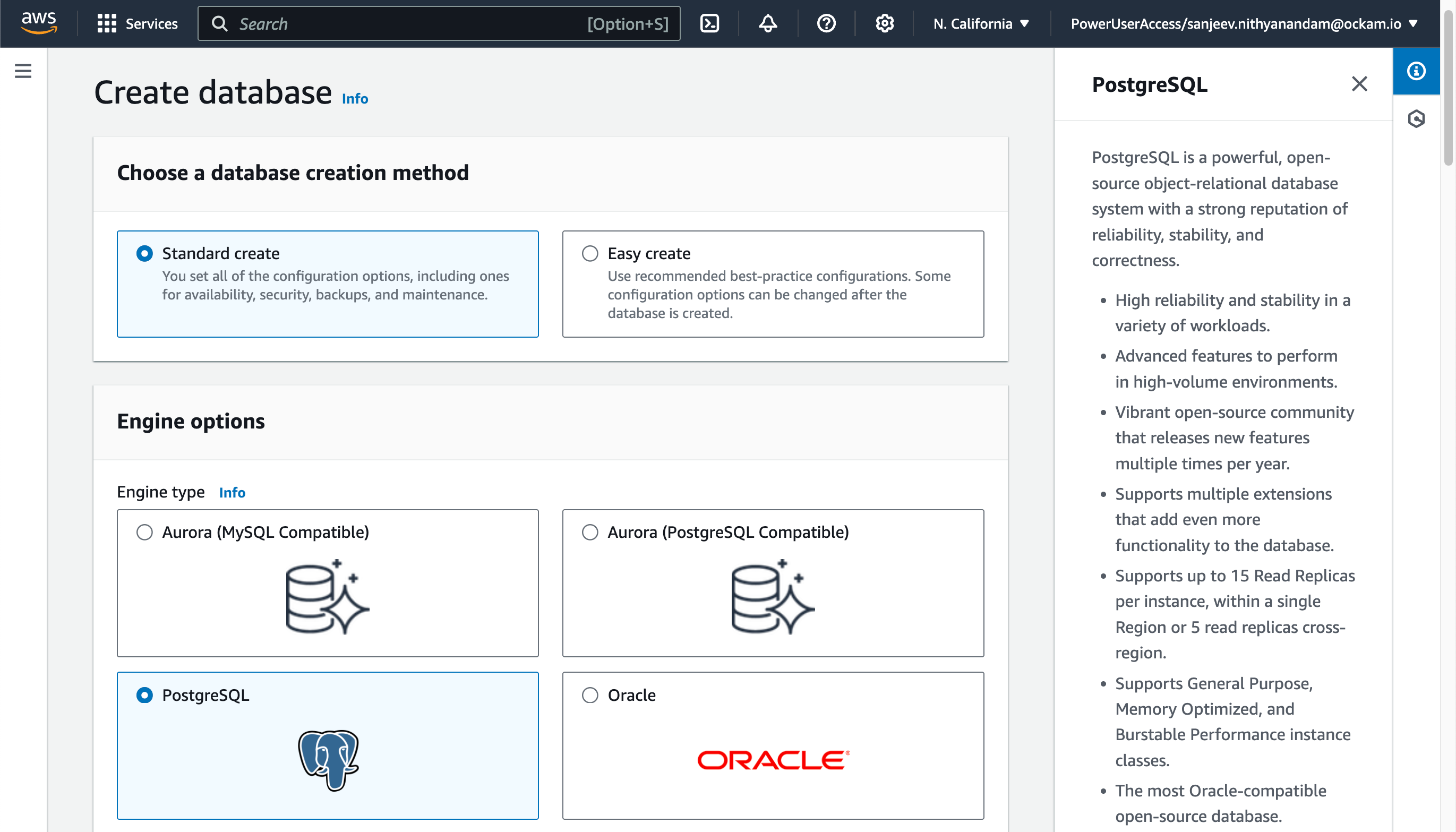

Create a PostgreSQL database

Within your AWS Console search for

RDS in the search field at the top and select the matching result. Visit the

Databases screen, and then click Create Database.

The Standard Create option provides a good set of defaults for creating a

RDS Database, so unless you've previous knowledge or experience to know you

might want something different I'd suggest choosing "PostgreSQL" and confirming the details and

then clicking Create database at the bottom of the screen.

Once you've started the database creation it may take about 15 minutes for provisioning to complete and for your database to be available.

Enable CDC on PostgreSQL

To enable Change Data Capture (CDC) on your PostgreSQL database, you'll need to modify some configuration parameters. In the RDS console:

- Go to the "Configuration" tab of your RDS instance

- Click on the parameter group linked to your instance

- Modify the following parameters:

- Set

rds.logical_replicationto 1

- Set

- Save changes and reboot your RDS instance

Create a table

Create a table in your PostgreSQL database to store the data that will be replicated to Snowflake. In addition, you may also need to set the replica identity to FULL for the table.

_10CREATE TABLE public.customers_10(_10 id INTEGER,_10 customer_name VARCHAR_10);_10_10ALTER TABLE customers REPLICA IDENTITY FULL;

Connect PostgreSQL to Snowflake

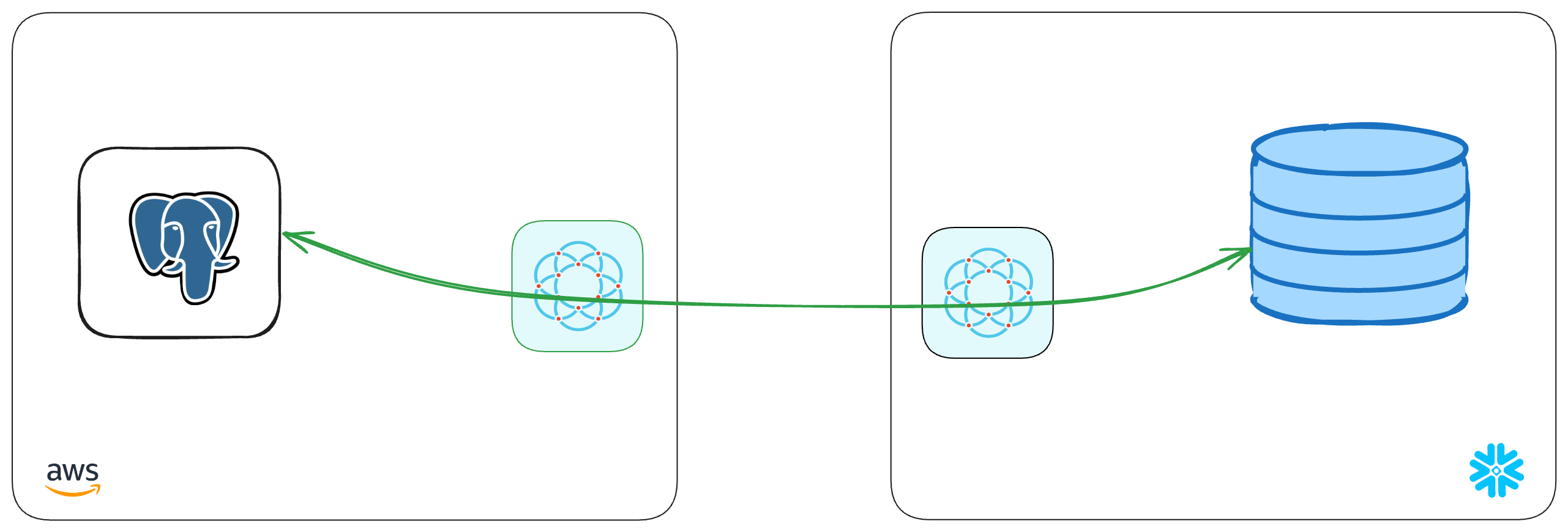

We've created a PostgreSQL database with CDC enabled. It's now time to connect it to Snowflake! The next stage is going to complete the picture below, creating a point-to-point connection between the two systems — without the need to expose any systems to the public internet!

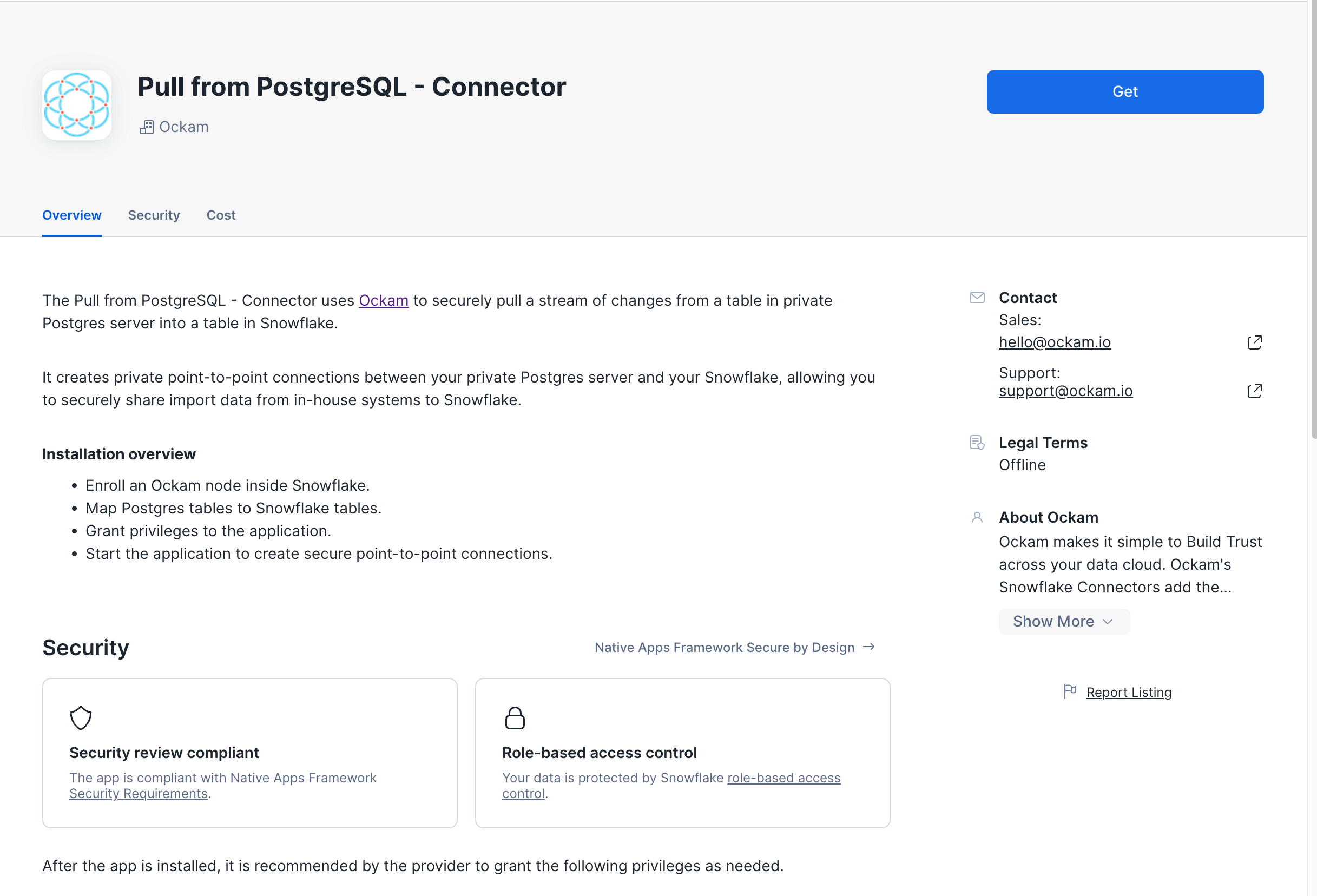

Get the app

The Snowflake Pull from PostgreSQL Connector by Ockam is available in the Snowflake Marketplace.

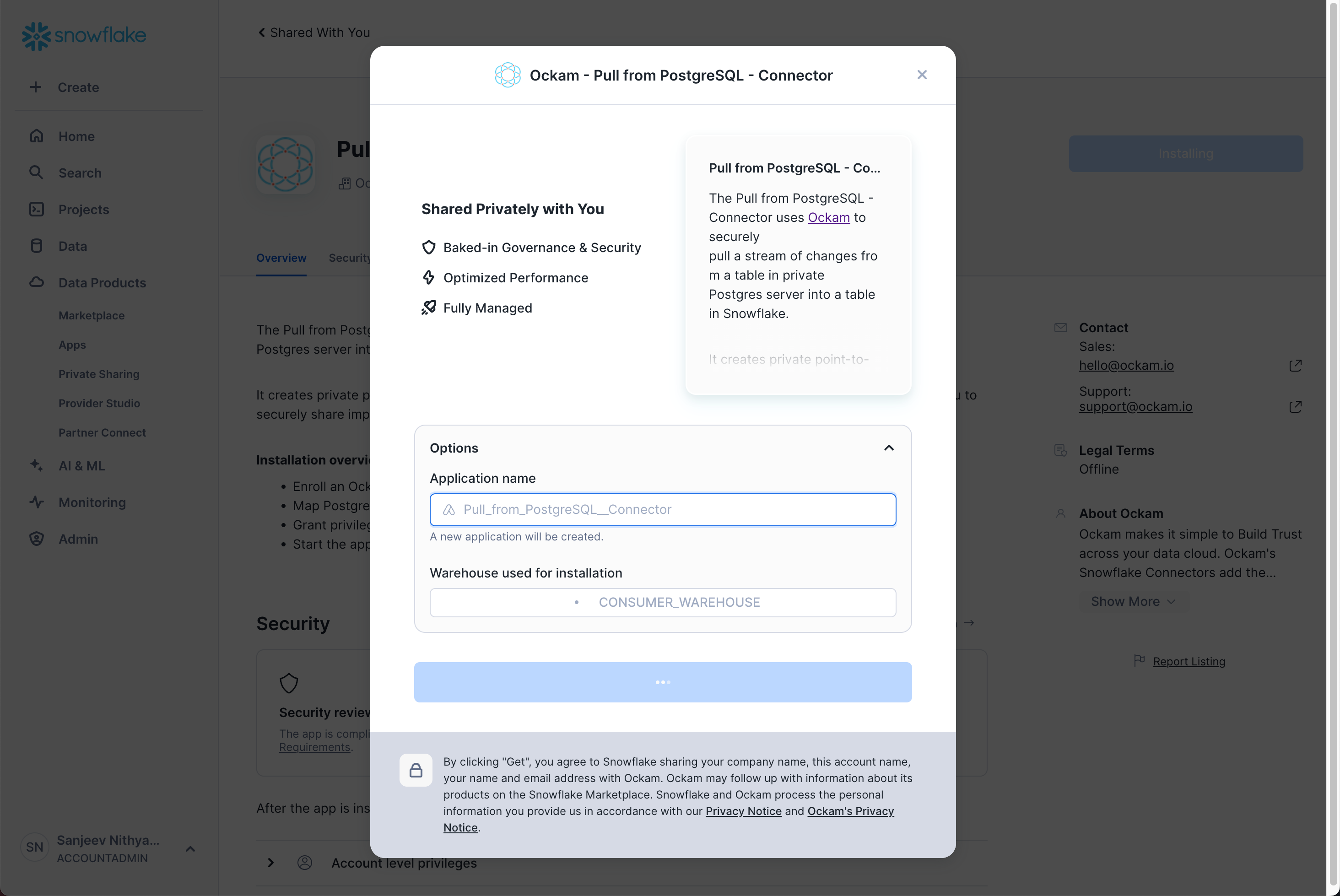

Select a warehouse

The first screen you're presented with will ask you to select the warehouse to utilize to activate the app and can choose to change the application name.

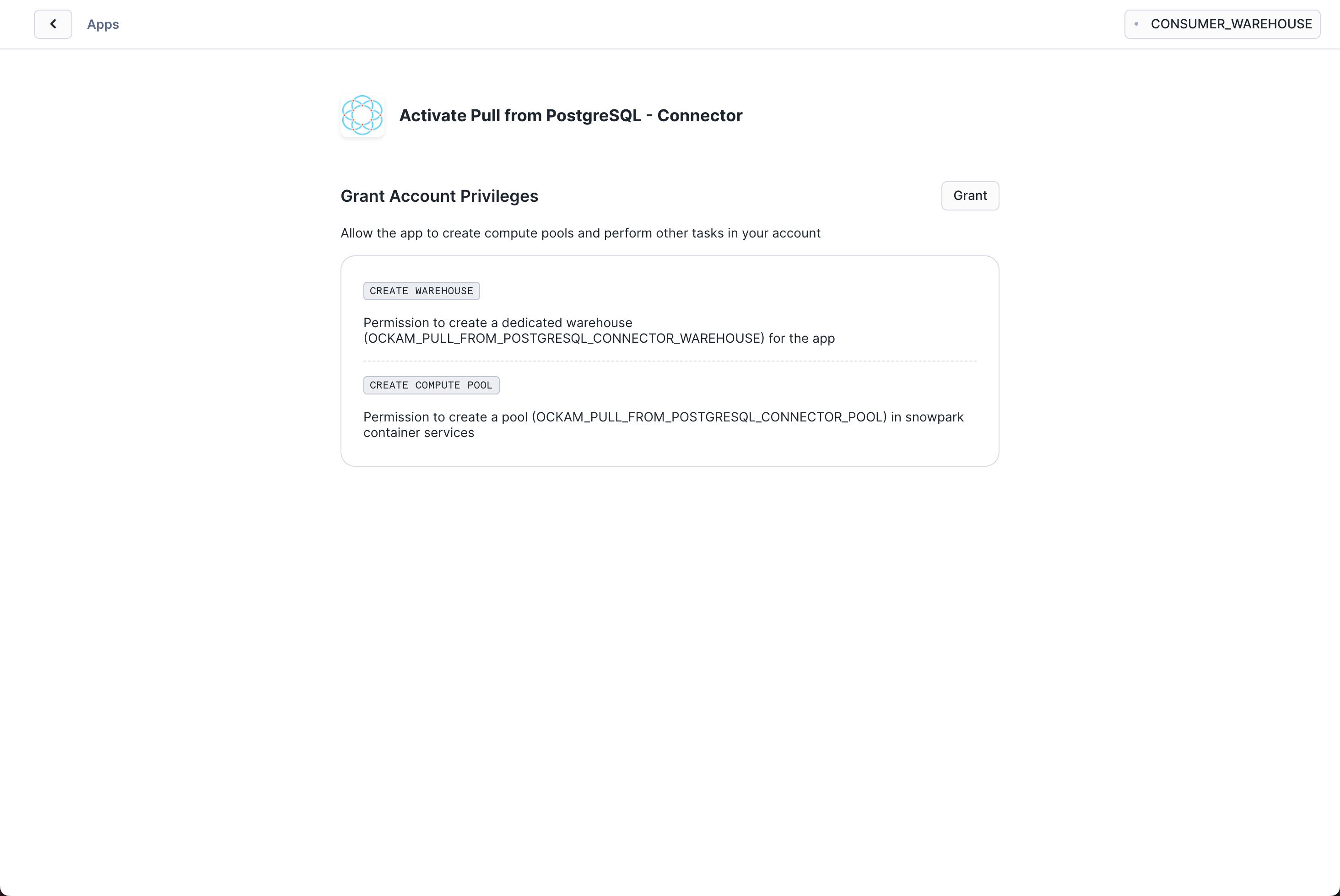

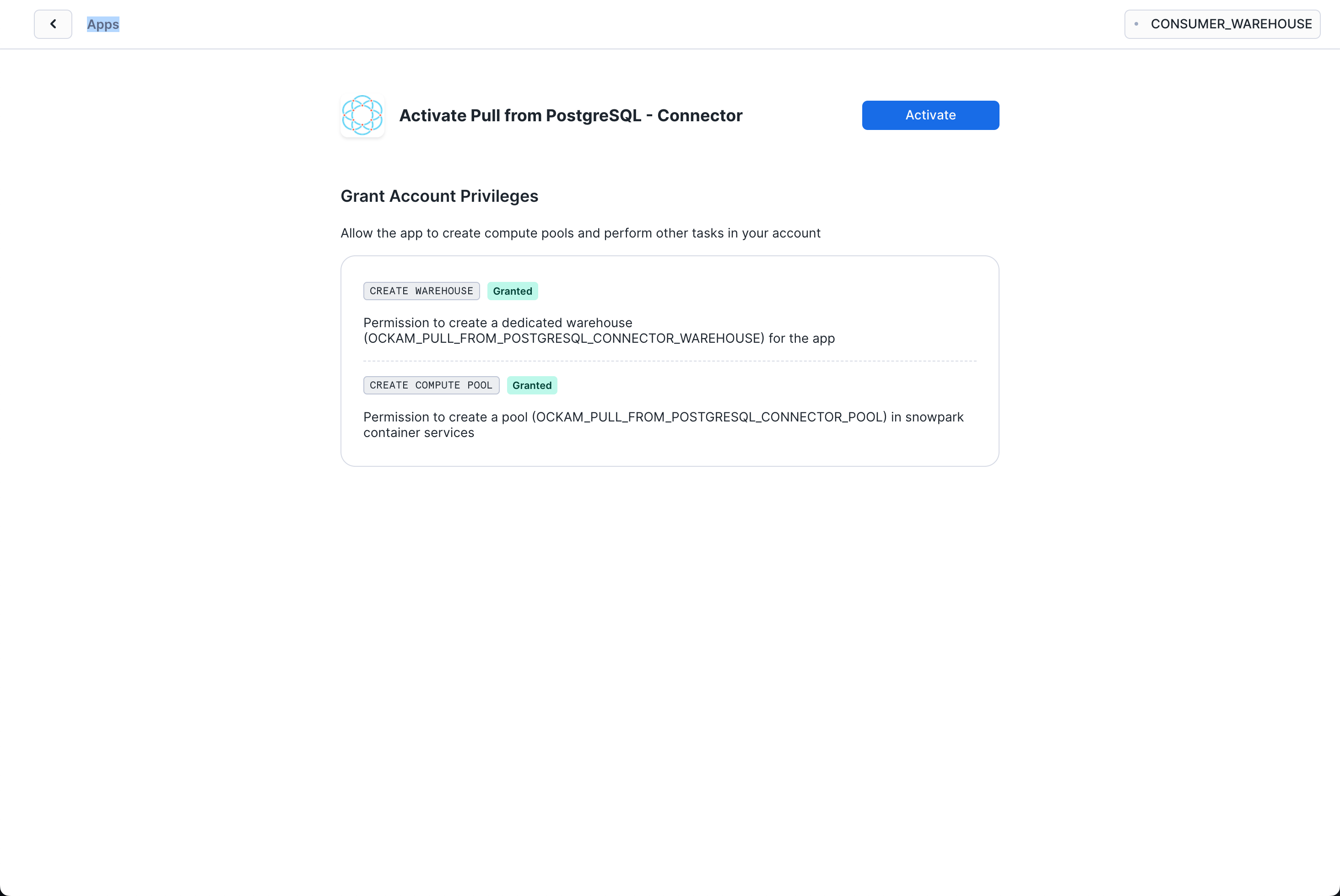

Grant account privileges

Click the Grant button to the right of this screen. The app will then be

automatically granted permissions to create a warehouse and create a compute

pool.

Activate app

Once the permissions grants complete, an Activate button will appear. Click

it and the activation process will begin.

Launch app

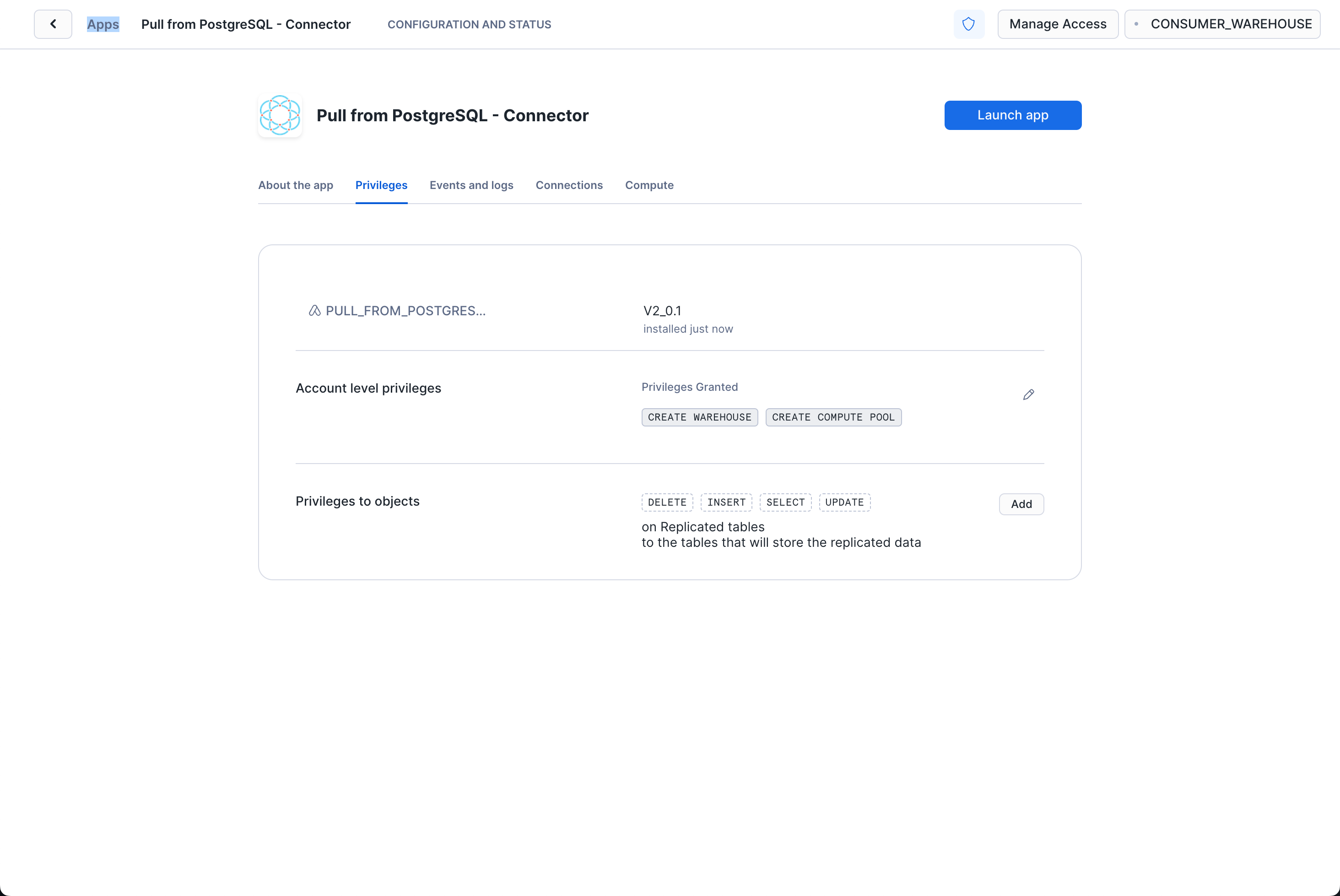

After the app activates you'll see a page that summarizes the

privileges that the application now has. There's nothing we need

to review or update on these screens yet, so proceed by clicking the Launch app button.

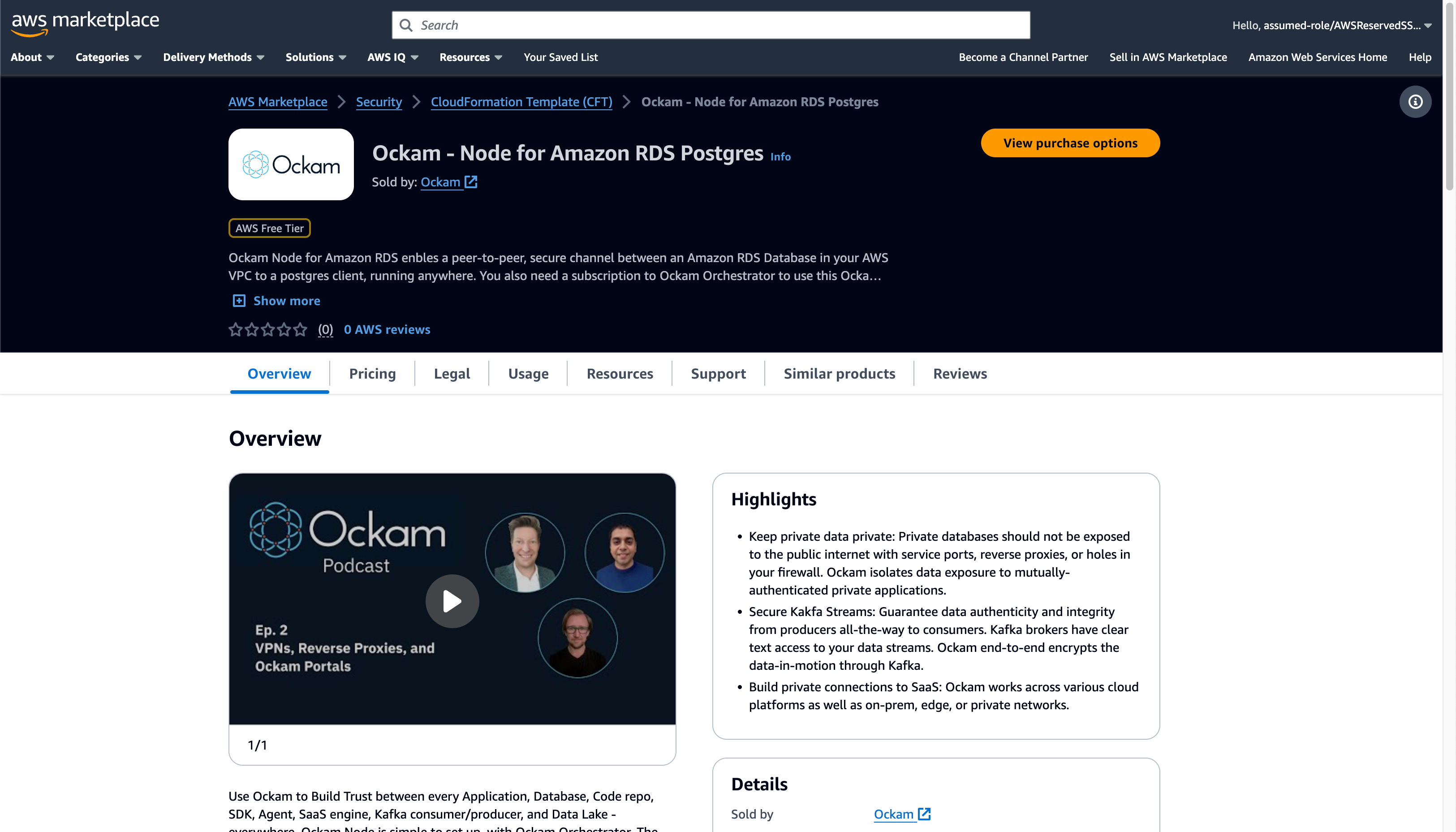

Launch Ockam node for Amazon RDS

The Ockam Node for Amazon RDS is a streamlined way to provision a managed Ockam Node within your private AWS VPC.

To deploy the node that will allow Snowflake to reach your Amazon RDS PostgreSQL database visit

the Ockam Node for Amazon RDS PostgreSQL listing in the AWS Marketplace, and click the Continue to Subscribe button, and then

Continue to Configuration.

On the configuration page choose the region that your Amazon RDS cluster is

running in, and then click Continue to Launch followed by Launch.

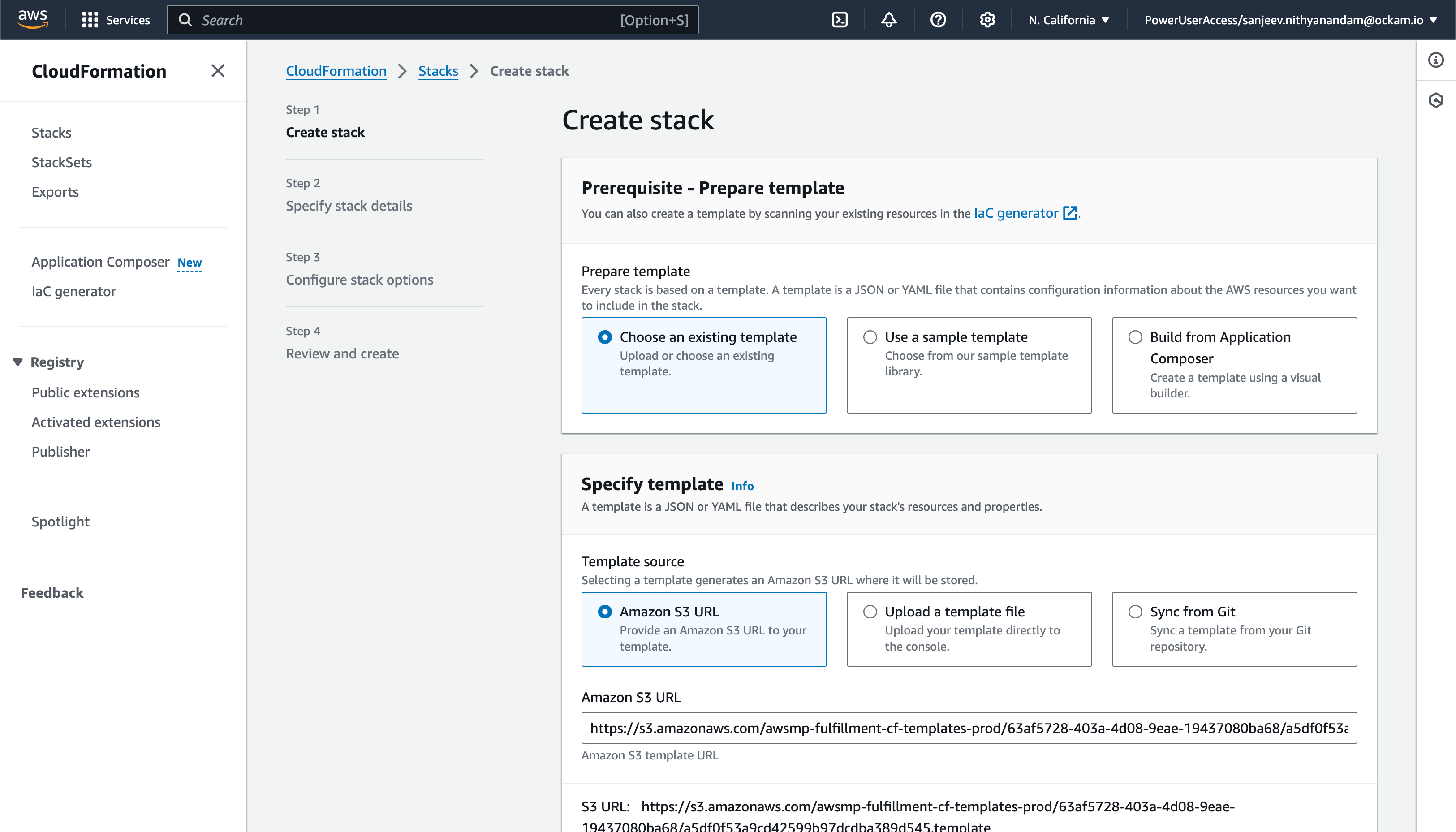

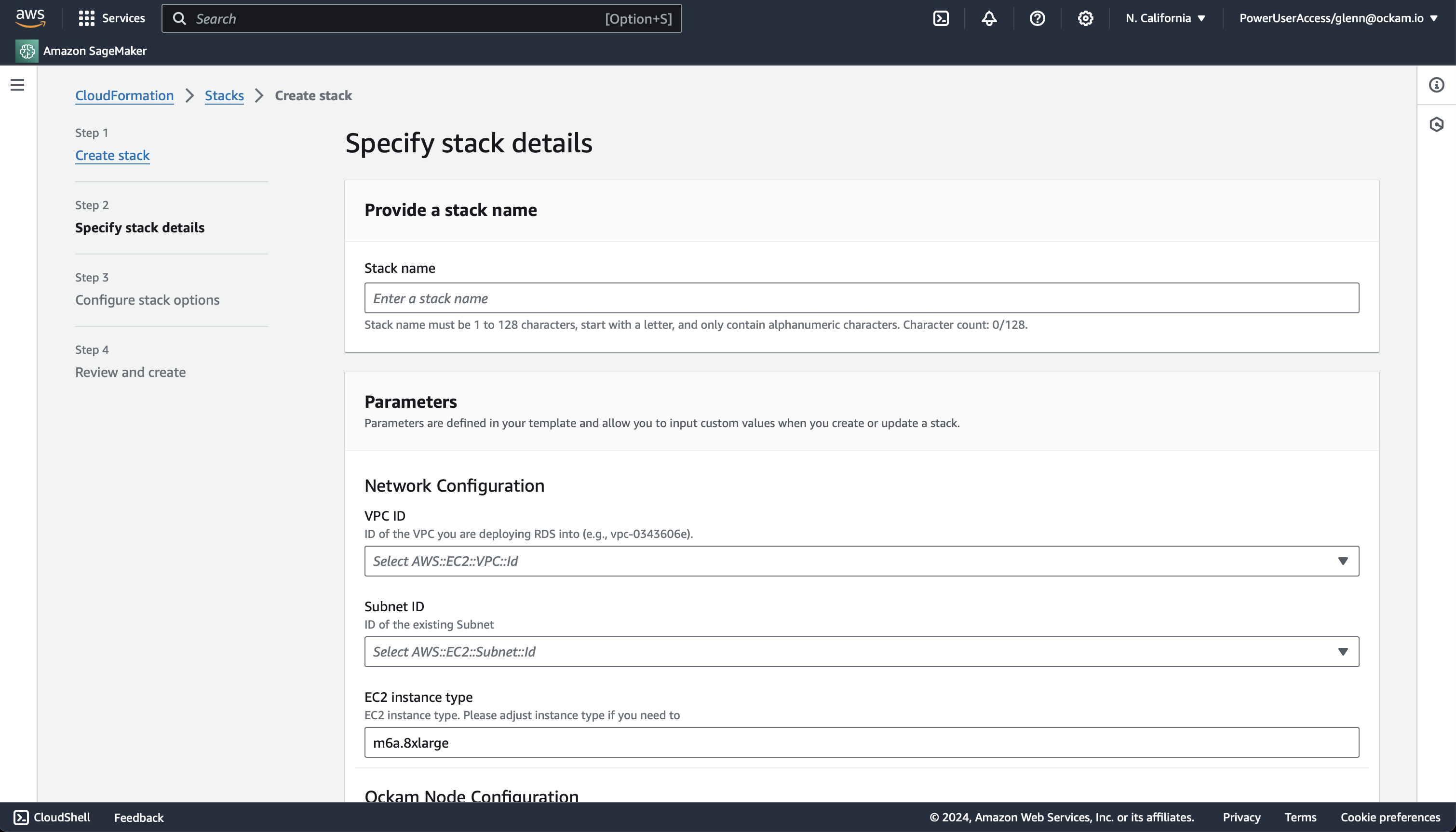

Enter stack details

The initial Create Stack screen pre-fills the fields with the correct

information for your node, so you can press Next to proceed.

Enter node configuration

This screen has important details to you need to fill in:

- Stack name: Give this stack a recognisable name, you'll see this in various locations in the AWS Console. It'll also make it easier to clean these resources up later if you with to remove them.

- VPC ID: The ID of the Virtual Private Cloud network to deploy the node in. Make sure it's the same VPC where you've deployed your Amazon MSK cluster.

- Subnet ID: Choose one of the subnets within your VPC, ensure MSK has a broker address available in that subnet.

- Enrollment ticket: Copy the contents of the

postgres.ticketfile we created earlier and paste it in here. - RDS PostgreSQL Database Endpoint: In the Connectivity & security for your Amazon RDS Database you will find Endpoint details. Copy the

Endpointvalue for the Private RDS Database that's in the same subnet you chose above. - JSON Node Configuration: Copy the contents of the

postgres.jsonfile we created earlier and paste it in here.

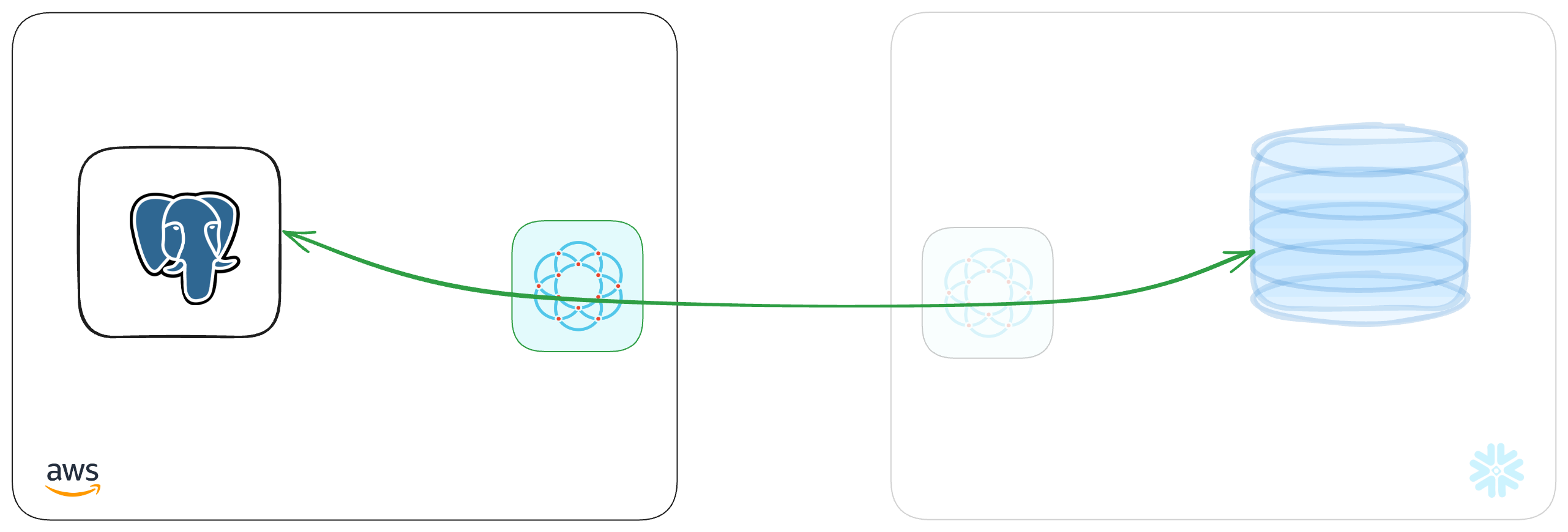

We've now completed the highlighted part of the diagram below, and our Amazon RDS PostgreSQL node is waiting for another node to connect.

Create a table in Snowflake

Create a table in Snowflake to store the data that will be replicated from PostgreSQL.

_10CREATE OR REPLACE TABLE customers (_10 id INTEGER,_10 customer_name VARCHAR_10);

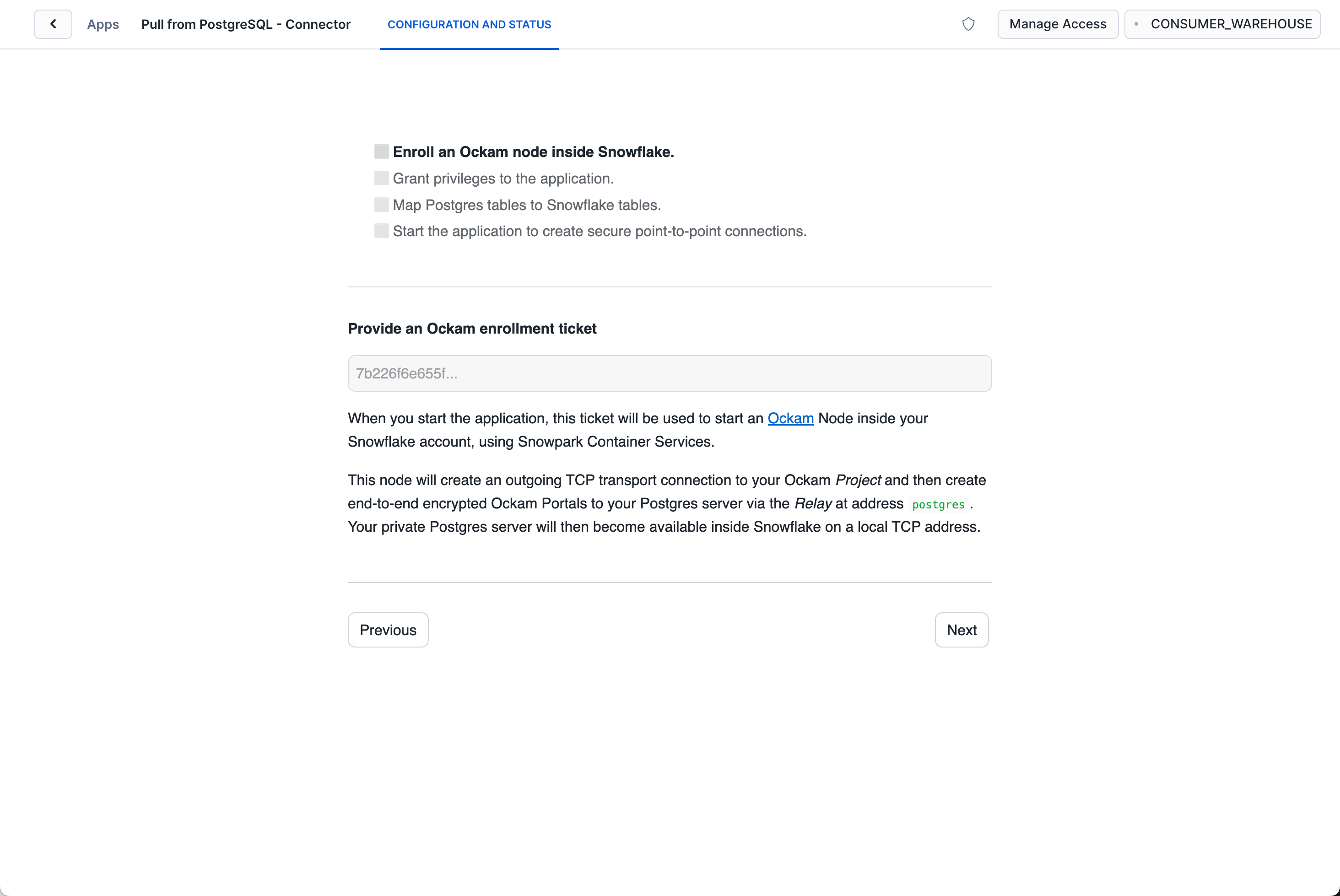

Configure connection details

Click "Get started" to open the Snowflake setup screen.

Take the contents of the file snowflake.ticket that we just created and paste

it into "Provide the above Enrollment Ticket" form field in the "Configure app"

setup screen in Snowflake.

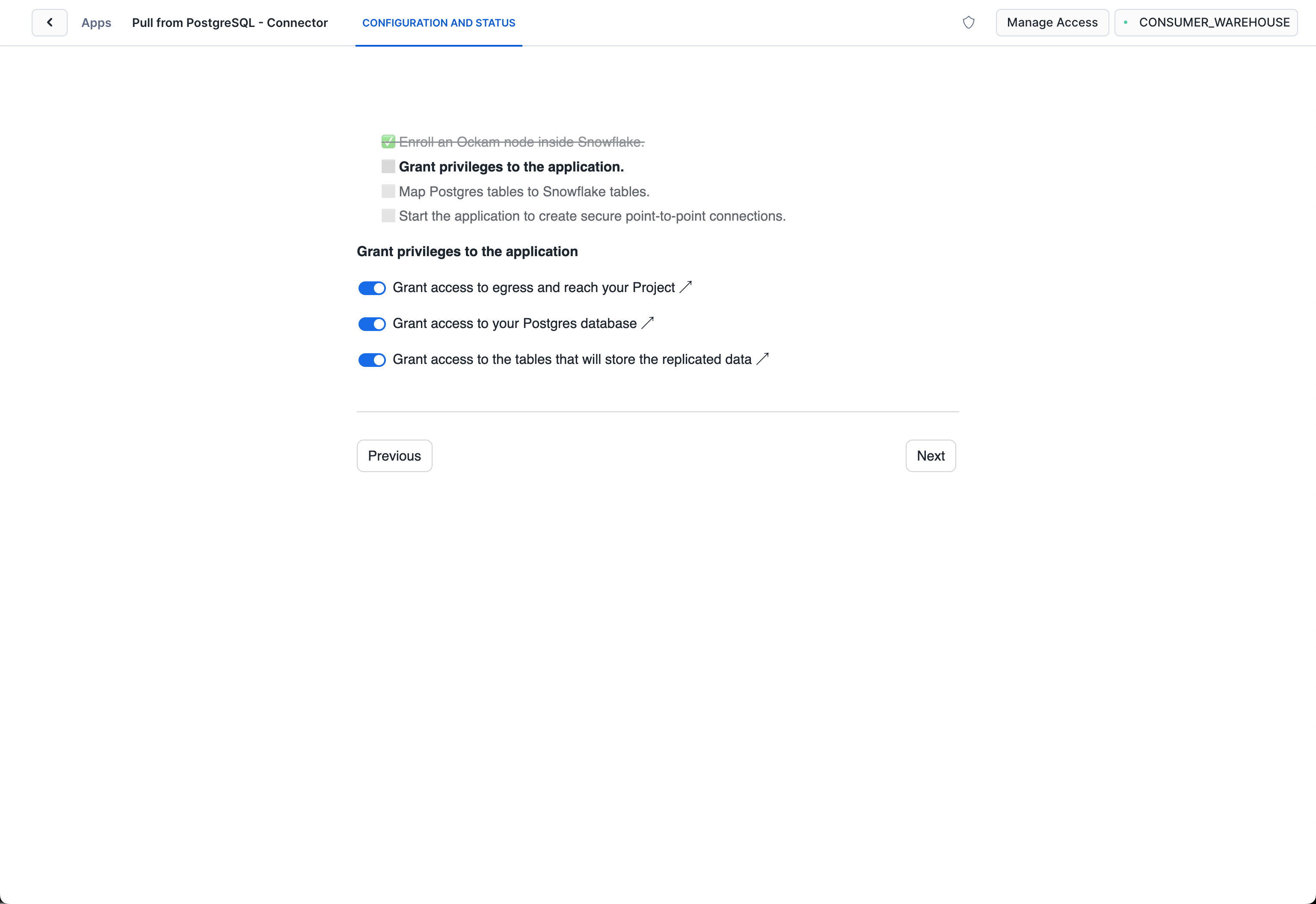

Grant privileges

To be able to authenticate with Ockam Orchestrator and then discover the route to our outlet, the Snowflake app needs to allow outbound connections to your Ockam project.

Toggle the Grant access to egress and reach your Project and approve the connection by

pressing Connect.

Toggle the Grant access to your postgre database and enter the username and password for your

PostgreSQL database and store it as a secret in snowflake.

Toggle the Grant access to the tables that will store the replicated data and select the table(s) that will store the replicated data from Snowflake.

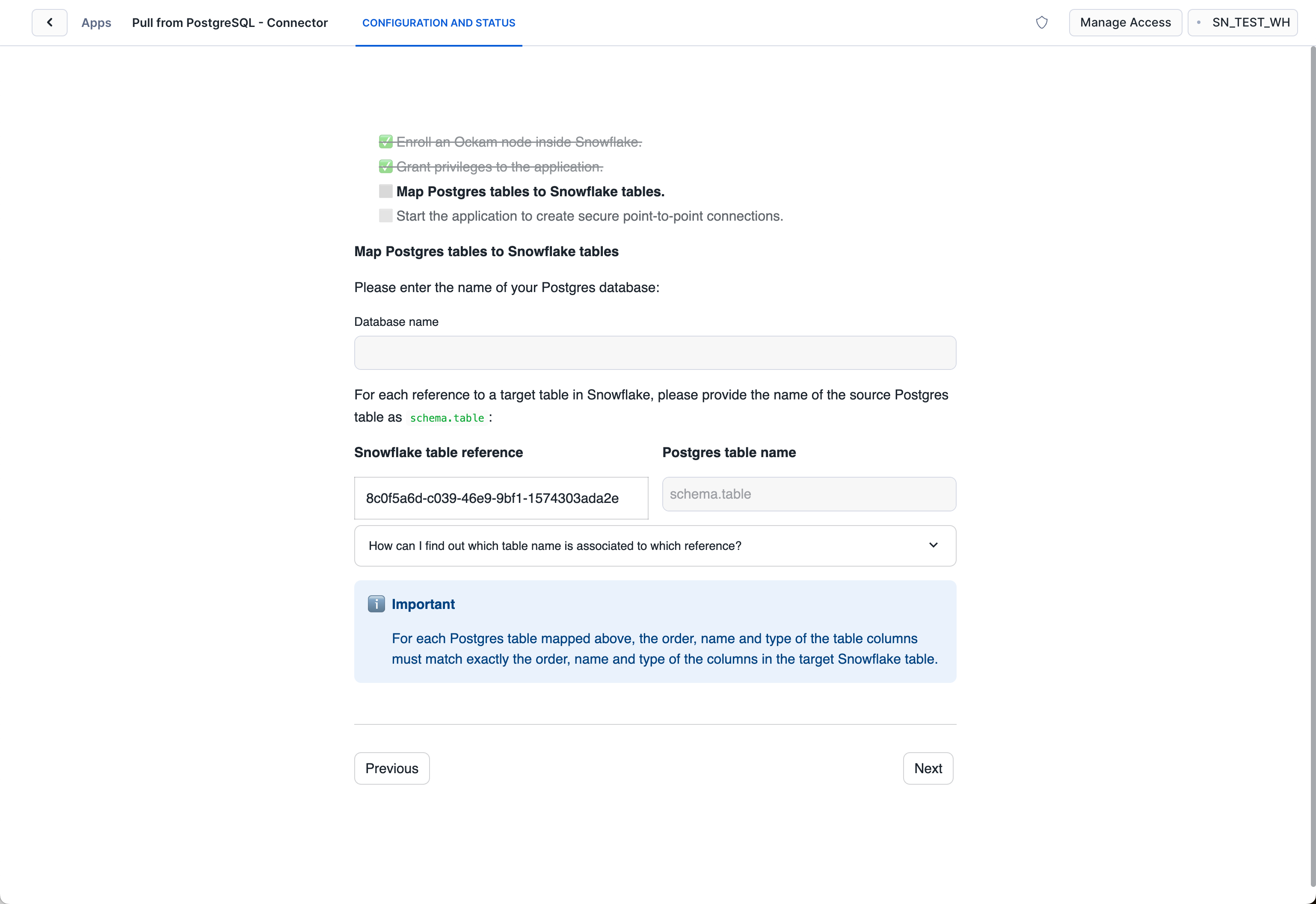

Map Snowflake stream to PostgreSQL table

Snowflake receives each stream of changes to a table in PostgreSQL database, and we need to define the database and mapping between each. Enter the name of the tables in PostgreSQL matching with the tables in Snowflake.

Table with same schema should exist in PostgreSQL database as well as snowflake.

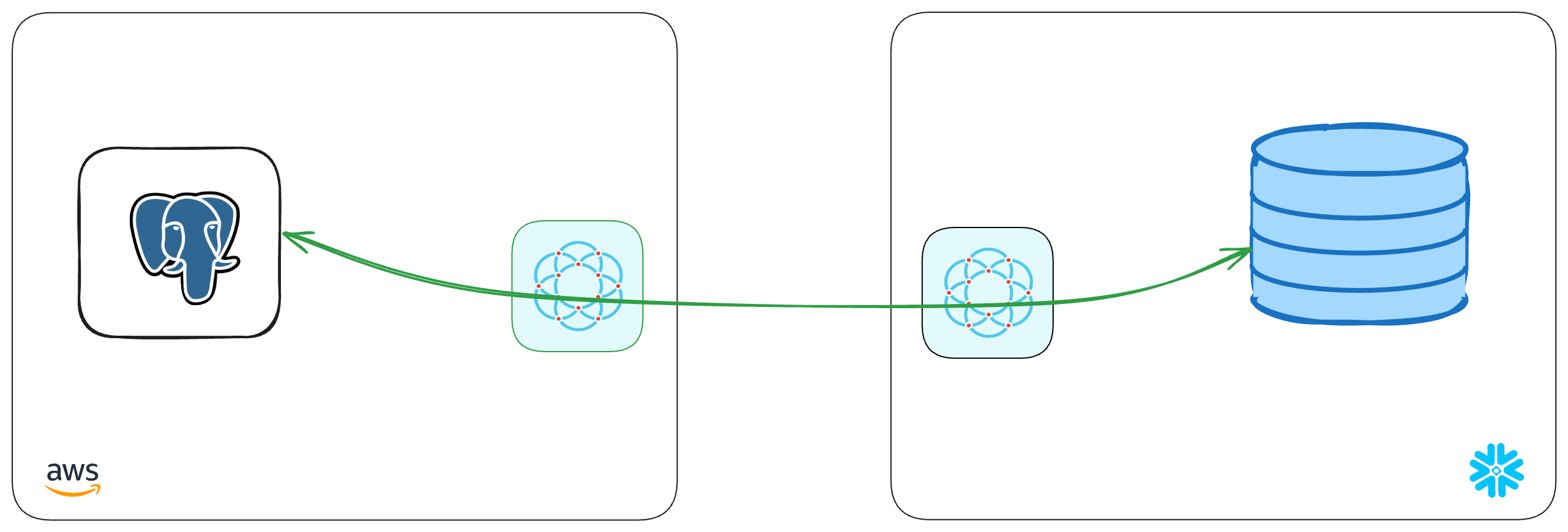

With that, we've completed the last step in the setup. We've now got a complete point-to-point connection that allows our Snowflake warehouse to securely pull data through to our private PostgreSQL database.

Next steps

Any updates to your data in your PostgreSQL table will now create a new record in your Snowflake table, which are then sent over your Ockam portal. To see it in action insert a row into your PostgreSQL table, then use your usual SQL tooling to see the record arrive in your Snowflake table.

If you'd like to explore some other capabilities of Ockam I'd recommend:

- Creating private point-to-point connections with any database

- Adding security as a feature in your SaaS product

Previous Article

Build completely private APIs in Snowflake

Next Article

Real-Time CDC Pipelines from Snowflake to PostgreSQL